Hi there!

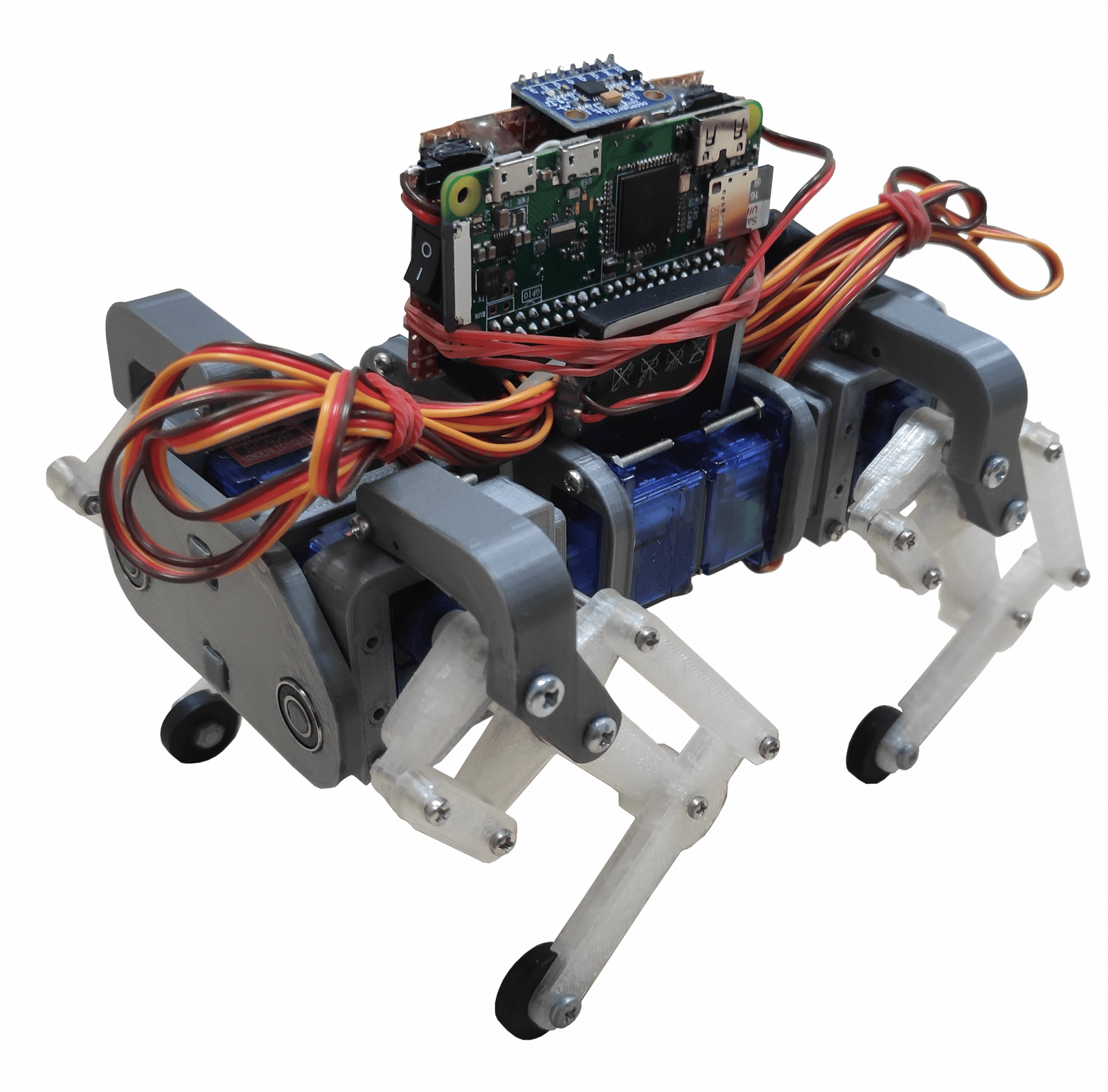

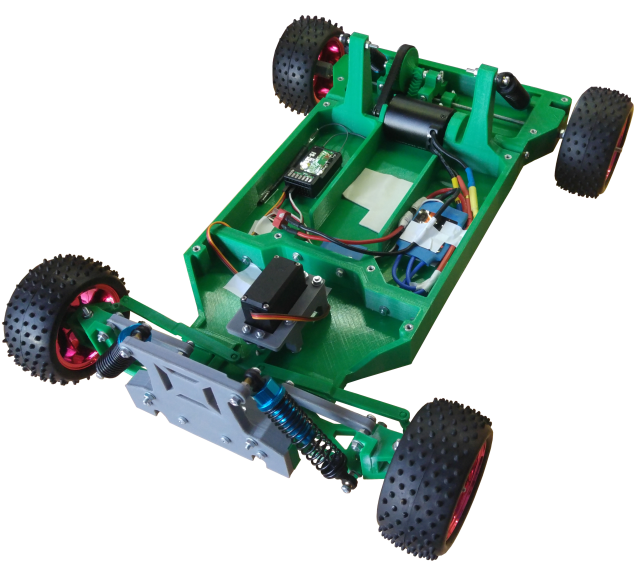

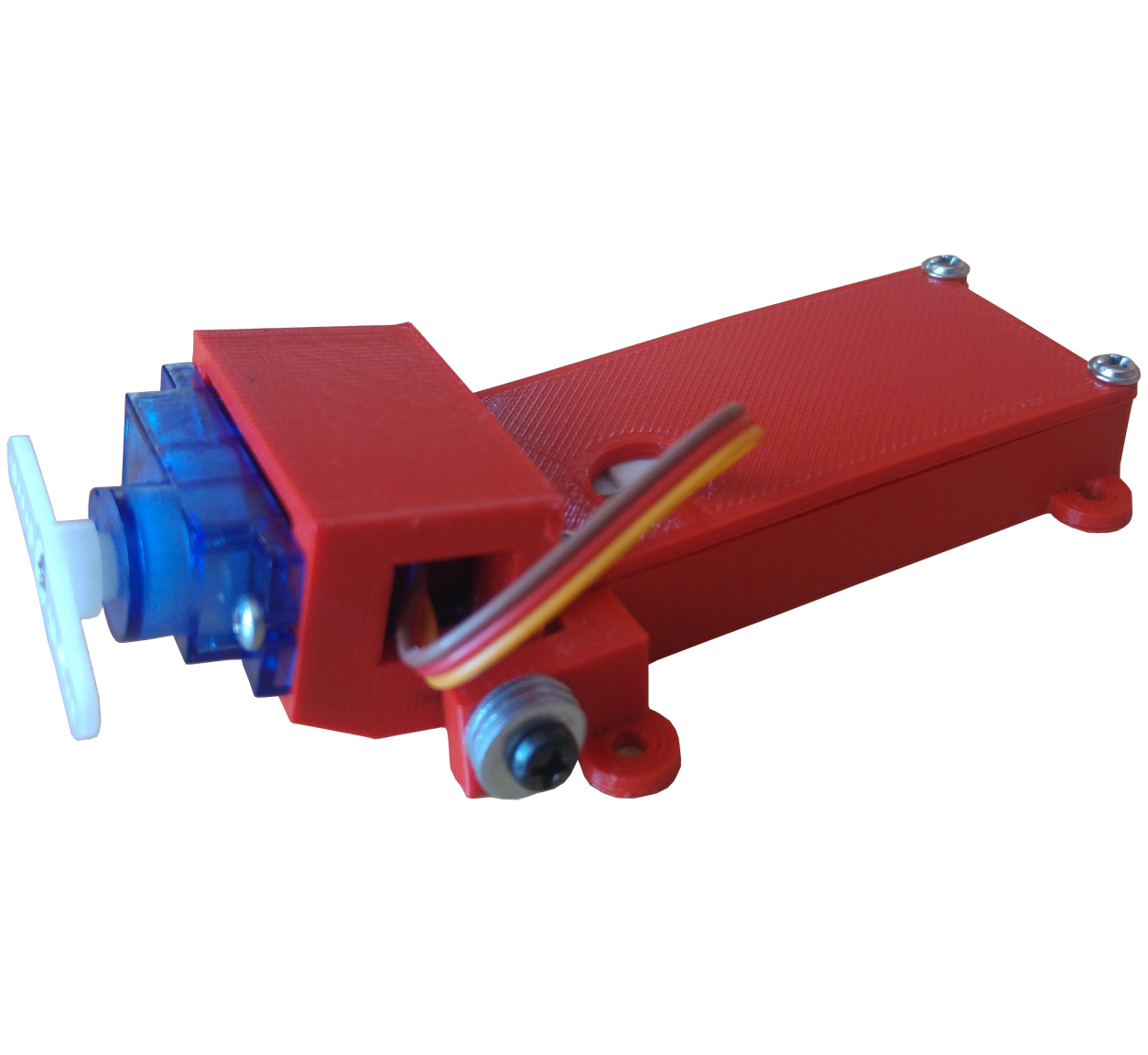

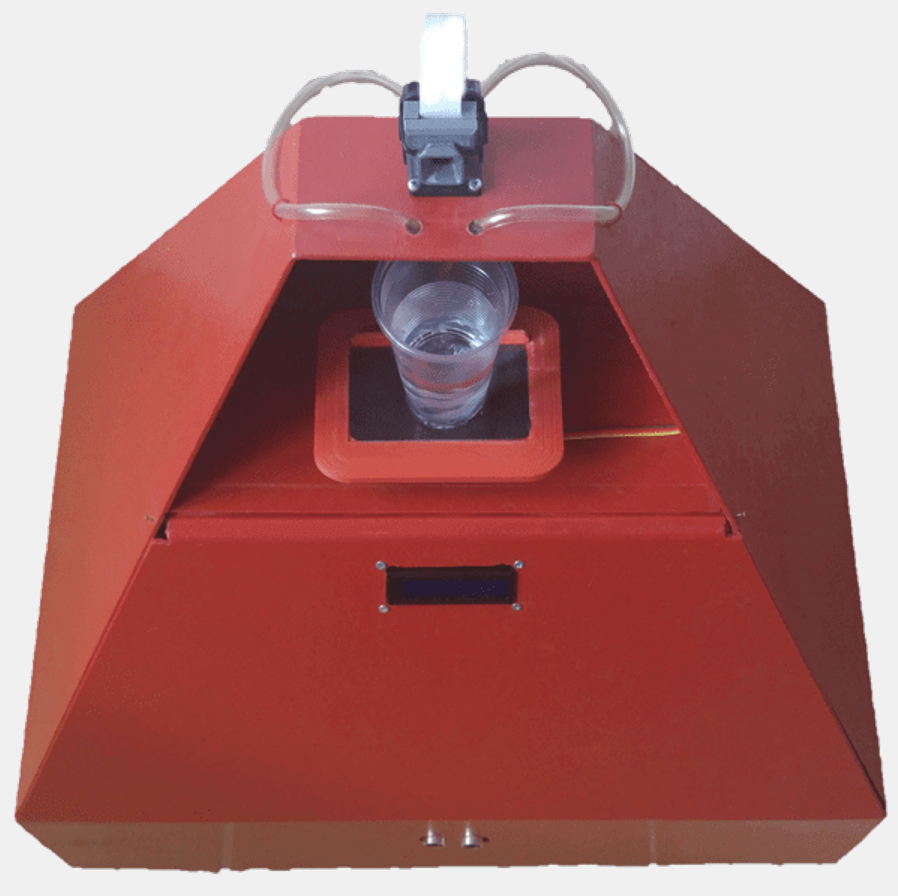

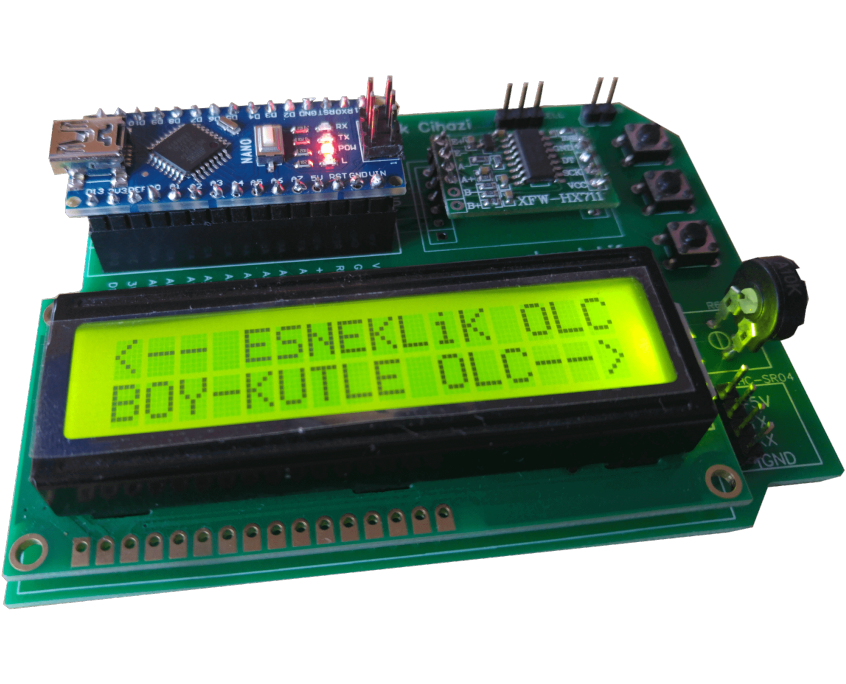

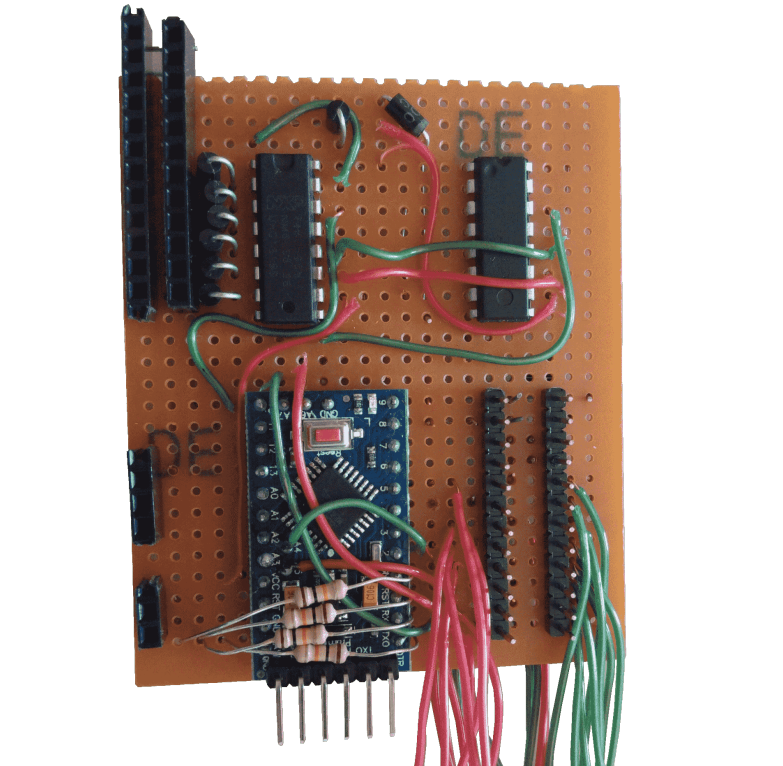

I’m Akif Kaya, a senior in Electrical-Electronics Engineering at Boğaziçi University. I started out as a tinkerer, taking apart my old toys and combining them with others. From there I learned electronics, programming, and robotics. My passion for technology has kept me inventing new robots and machines that help people, and the projects I created have won various competitions. That passion comes from the maker spirit.

For any questions, inquiries, or requests, feel free to message me by email or Instagram at any time! I’m based in the Istanbul-Kocaeli area, so I’m also happy to meet in person to discuss projects or collaborations.

RESUME